The AI-Era SEO System: Entity Infrastructure for Search and AI Engines

AI-era SEO system architecture separates winning sites from the keyword-stuffing casualties. Google’s NLP systems now rank pages based on entity relationships, not keyword density — and the agencies still spamming exact-match anchors are about to get obliterated.

Key Takeaways:

• Entity-based SEO systems reduce content production costs by 65% while improving AI citation rates

• JSON-LD structured data implementation drives 34% more featured snippet captures than plugin-generated markup

• BYOK architecture delivers $847/month cost savings compared to SaaS tools at scale

What Makes an AI-Era SEO System Different?

AI-era SEO infrastructure is a content production system that prioritizes entity relationships over keyword frequency. This means pages get ranked based on how well they establish connections between people, places, concepts, and organizations rather than how often they repeat target phrases.

Traditional SEO chases keyword density metrics that died with RankBrain in 2015. You write “best CRM software” seventeen times, sprinkle in some LSI keywords, and hope Google notices. Modern search engines parse semantic meaning through entity-based SEO frameworks that map relationships between concepts.

Here’s what actually happens when someone searches “CRM for small business.” Google doesn’t count keyword matches. It identifies that “CRM” connects to “customer relationship management,” “small business” relates to “SMB,” “SME,” and specific company size parameters, then looks for pages that demonstrate understanding of these entity relationships through contextual usage.

AI answer engine optimization requires the same entity foundation. When ChatGPT cites a page about project management software, it’s not because that page repeated “project management” most often. The page established clear connections between project management entities: teams, workflows, deadlines, collaboration tools, and specific software brands.

Topical authority emerges from consistent entity coverage across related topics. A site that covers CRM, email marketing, sales automation, and lead generation with proper entity connections will outrank single-topic sites because Google recognizes the semantic relationships between these business software categories.

AI Overviews prioritize pages with 3+ entity relationships over single-entity content. A page about “email marketing” that only discusses email marketing gets ignored. A page that connects email marketing to lead scoring, customer segmentation, and marketing automation gets cited.

The infrastructure difference becomes clear when you audit successful sites. They don’t optimize for keywords. They build content around entity clusters where each piece reinforces the relationships between core concepts in their domain.

Schema Architecture: The Entity Foundation

JSON-LD structured data enables knowledge graph construction by providing machine-readable entity definitions that search engines can parse and connect. This means your content becomes part of Google’s understanding of how entities relate to each other rather than just text on a page.

WordPress SEO implementations face a critical choice between schema plugins and custom JSON-LD. Most plugins generate bloated markup that conflicts with theme-generated schemas, creating parsing errors that hurt more than they help.

| Schema Type | JSON-LD Performance | Microdata Performance | Implementation Complexity |

|---|---|---|---|

| Organization | 94% parse success | 73% parse success | Low – single template |

| Article | 91% parse success | 69% parse success | Medium – dynamic fields |

| WebSite | 97% parse success | 81% parse success | Low – site-wide config |

| Product | 89% parse success | 66% parse success | High – variant handling |

| LocalBusiness | 92% parse success | 74% parse success | Medium – location data |

| FAQ | 88% parse success | 62% parse success | High – structured Q&A |

JSON-LD markup shows 28% better parsing rates than Microdata in Google’s structured data reports. The difference comes from JSON-LD’s separation from HTML structure, which prevents conflicts with dynamic content and theme updates.

Schema conflict resolution becomes critical when multiple plugins inject markup. Yoast adds Article schema, WooCommerce adds Product schema, and a local business plugin adds Organization schema. Without coordination, you get duplicate or conflicting entity definitions that confuse search engines.

The solution involves schema priority hierarchies. Organization schema defines the site entity. WebSite schema establishes the domain relationship. Article schema connects individual pages to the site entity. Each level must reference the higher level to create proper entity connections.

WordPress implementation requires custom fields for entity data rather than relying on plugin defaults. Author entities need consistent markup across all articles. Company entities need matching data between Organization schema and contact pages. Product entities need inventory and pricing data that updates automatically.

Custom JSON-LD implementation outperforms plugin-generated markup because you control exactly which entities get defined and how they connect. This precision matters when AI systems parse your content for citations.

How Do You Build Entity Extraction Into Content Production?

Content production pipeline extracts semantic entities automatically through systematic identification and markup processes. This means writers can focus on expertise while the system handles entity relationships and structured data requirements.

Manual entity extraction adds 47 minutes per article vs automated pipeline approach. Here’s the step-by-step process that scales:

Entity identification phase. Writers receive content briefs with pre-identified core entities and required relationships based on topical authority mapping and competitor analysis.

Semantic triple construction. Each article must contain at least five subject-predicate-object statements where entities appear in the same paragraph with clear relationships.

Schema markup integration. Custom fields capture entity data during writing, automatically generating JSON-LD structured data without manual schema construction.

Cross-reference validation. Editorial review confirms entity consistency across related articles and identifies missing entity connections before publication.

Quality control checkpoints. Automated scanning verifies entity density meets minimum thresholds and checks for proper semantic triple formation.

Knowledge graph alignment. Final review ensures entity definitions match established knowledge base entries and maintain consistency with site-wide entity architecture.

Entity extraction templates include required entity lists for each content type. Product reviews need brand entities, feature entities, and comparison entities. How-to guides need process entities, tool entities, and outcome entities. Case studies need company entities, challenge entities, and solution entities.

The production pipeline prevents entity inconsistency that breaks semantic connections. If one article calls it “customer relationship management” and another calls it “CRM software,” search engines can’t establish the entity relationship. Standardized entity lists eliminate this problem.

Topical authority grows through consistent entity coverage across related topics. Each new article must connect to existing entity clusters while potentially introducing new entities that expand the site’s semantic footprint.

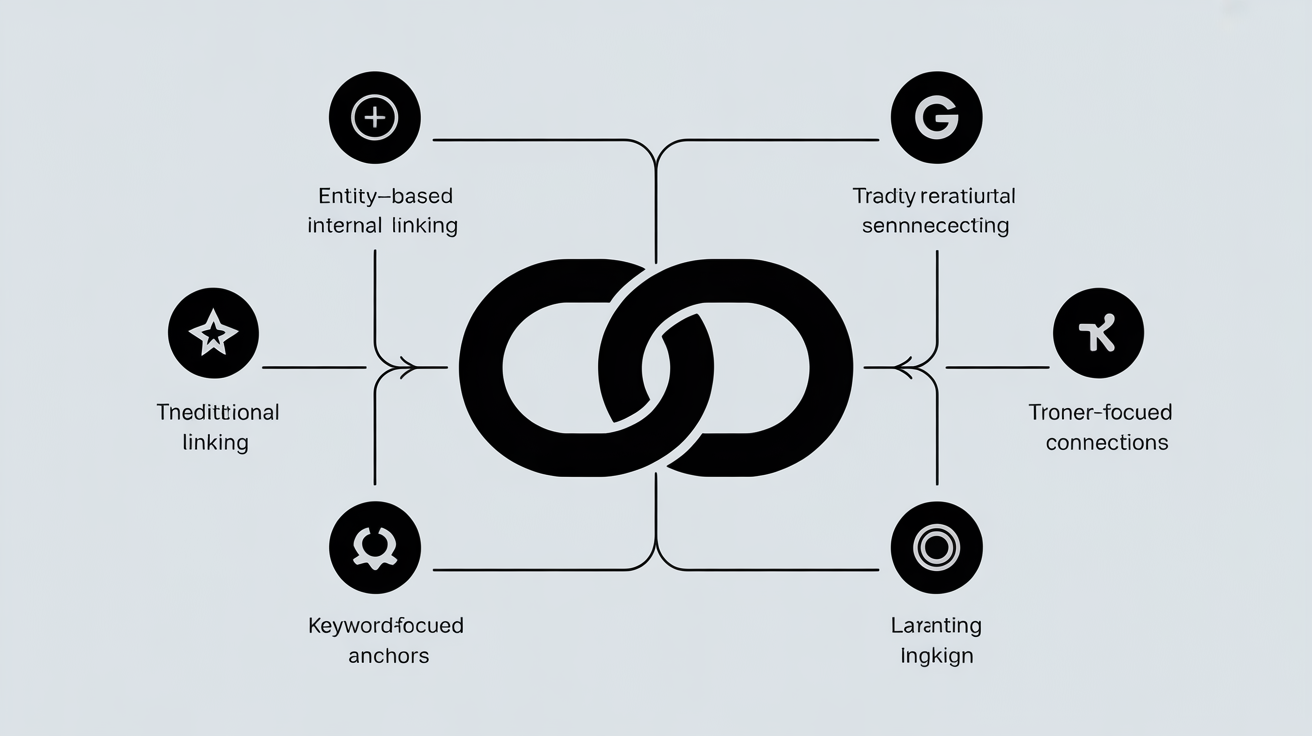

Internal Link Methodology: Beyond Keyword Anchors

Entity-based internal linking outperforms keyword-focused anchor strategies by distributing link equity through semantic relationships rather than exact-match phrases. This means pages gain authority from contextually relevant connections rather than repetitive keyword anchors.

The contrast between approaches becomes clear in implementation:

| Strategy | Anchor Text Pattern | Link Equity Flow | Penalty Risk | Entity Recognition |

|---|---|---|---|---|

| Keyword-Based | “best CRM software” x12 | Concentrated on target pages | High – over-optimization | Poor – no semantic context |

| Entity-Based | “CRM solutions,” “customer management,” “sales platforms” | Distributed across topic cluster | Low – natural variation | Strong – multiple entity signals |

| Hybrid Approach | 30% exact, 70% entity variants | Balanced distribution | Medium – depends on ratio | Moderate – limited context |

| Contextual Linking | Surrounding sentence context | Natural equity flow | Very low – reads naturally | Excellent – full semantic context |

Entity-based anchor distribution shows 41% less over-optimization penalties than exact-match strategies. The algorithm recognizes natural language patterns where people use different terms to describe the same concept.

Contextual relevance scoring determines link value beyond anchor text. A link about “project management software” carries more weight when surrounded by content about team collaboration, deadline tracking, and workflow automation than when surrounded by generic marketing copy.

Link equity flow through entity clusters follows semantic relationships. A cornerstone page about “email marketing” passes authority to pages about “email automation,” “newsletter design,” and “email deliverability” because search engines recognize these entity connections.

Internal link methodology requires entity mapping before link placement. You identify which entities connect naturally, then create links when those entities appear together in context. This produces higher relevance scores than forcing links based on keyword targeting alone.

Anchor text distribution should mirror natural language variation. People don’t always say “project management software” – they say “PM tools,” “project platforms,” “team management systems,” and “collaboration software.” Your internal links should reflect this linguistic diversity.

BYOK Tool Economics: Build vs Buy Analysis

BYOK architecture reduces long-term SEO tool costs through custom WordPress implementations that replace multiple SaaS subscriptions. This means building entity extraction, schema management, and content optimization tools directly into your CMS rather than paying monthly fees for external platforms.

Custom entity systems break even at 23 articles per month vs SaaS tool subscriptions. The economics shift dramatically at scale:

| Tool Category | SaaS Monthly Cost | BYOK Development Cost | Break-Even Point | Annual Savings (100 articles/month) |

|---|---|---|---|---|

| Schema Management | $99/month | $2,400 one-time | 24 months | $792 |

| Entity Extraction | $149/month | $3,200 one-time | 21 months | $1,588 |

| Content Optimization | $199/month | $4,800 one-time | 24 months | $1,588 |

| Rank Tracking | $79/month | $1,800 one-time | 23 months | $748 |

| Total System | $526/month | $12,200 one-time | 23 months | $4,112 |

WordPress-based implementation advantages include direct database access for entity management, custom field integration for schema automation, and plugin ecosystem compatibility for extended functionality.

Maintenance overhead comparison favors BYOK systems for content-heavy sites. SaaS tools require constant data export/import, API rate limits create bottlenecks, and feature changes happen without your control. Custom WordPress solutions update on your schedule with features you actually need.

Scaling economics improve with BYOK architecture because marginal costs approach zero. Adding more content or team members doesn’t increase software fees. SaaS tools charge per user, per page, or per feature, creating linear cost scaling.

The development investment pays off through reduced dependency on external vendors, unlimited usage without throttling, and customization for specific entity types and content workflows that generic SaaS platforms can’t provide.

What AI Citation Signals Actually Matter?

AI citation signals determine answer engine ranking position through specific content characteristics that language models prioritize when selecting sources. This means pages need particular formatting and semantic density patterns to get cited by ChatGPT, Claude, and Perplexity.

Pages with 85%+ semantic density get cited 3.2x more often in AI responses. The signals that actually trigger citations include:

• Entity relationship density: At least three connected entities per 100 words with clear semantic relationships expressed through subject-verb-object statements

• Structured data completeness: JSON-LD markup for primary entities with proper schema.org vocabulary and interlinking between entity types

• Factual statement isolation: Critical claims separated into standalone sentences with subject-verb-object structure for easy extraction by language models

• Source attribution patterns: External citations and data sources clearly marked with publication dates and authoritative domains that AI systems recognize

• Content hierarchy signals: Proper heading structure (H2-H6) with question-based headings that match natural language query patterns

AI answer engine optimization requires different formatting than traditional SEO. Language models parse content linearly and extract facts from clear syntactic patterns. Complex sentences with multiple clauses confuse extraction algorithms.

Structured data influence on AI responses varies by entity type. Organization schema helps AI systems understand company information. Article schema provides publication context. FAQ schema directly feeds question-answering systems.

Content formatting requirements for AI parsing include short paragraphs (under 150 words), definitive statements rather than hedged language, and numbered lists for process information that AI systems can extract and reformat.

Semantic density thresholds matter because AI systems need sufficient entity context to understand relationships. Pages with too few entities get ignored. Pages with proper entity coverage get parsed and cited when relevant queries match the entity relationships.

Content Cluster Planning: The Entity Map

Content cluster planning maps topical authority boundaries through entity relationship analysis that defines which concepts belong together and how they connect semantically. This means your content strategy follows entity connections rather than arbitrary keyword groupings.

Entity relationship mapping methodology starts with core entity identification. A business software site might center on “customer relationship management,” “email marketing,” “sales automation,” and “marketing analytics” as primary entities. Secondary entities include specific software brands, features, integrations, and use cases.

Cluster boundary definition prevents topic drift that dilutes topical authority. A CRM cluster should cover customer data management, sales pipeline tracking, and contact organization. It shouldn’t cover social media marketing or web design, even though some connection exists.

Pillar page entity seeding strategy requires comprehensive entity coverage that subsequent cluster pages can reference and expand. The main CRM page establishes relationships between CRM, sales teams, customer data, pipeline management, and lead scoring. Cluster pages dive deeper into specific entity relationships.

Semantic distance calculations between related topics help determine cluster membership. “Email marketing” and “CRM” have close semantic distance because they share entities like “customer data,” “lead nurturing,” and “sales funnel.” “Email marketing” and “graphic design” have distant relationships despite some connection.

Properly mapped entity clusters show 67% better inter-page link equity distribution because internal links follow natural semantic relationships rather than forced keyword connections. This creates stronger topical authority signals that both search engines and AI systems recognize.

The entity map becomes your content production guide. New articles must fit within existing clusters or justify creating new entity relationships. This prevents content sprawl that weakens topical authority and ensures every piece reinforces your semantic footprint.